Yearly Archives: 2014

Jun 30, 2014 Ted Sichelman

A recent American Bar Association “Corporate Counsel” seminar styled itself as “The Uncertain Arena: Claims for Damages and Injunctive Relief in the Unpredictable World of IP Litigation.” The seminar began by recounting the seemingly surprising $1 billion-plus damage awards in the patent infringement actions Carnegie Mellon v. Marvell Technology, Apple v. Samsung, and Monsanto v. DuPont. These blockbuster awards stand in stark contrast to the usual awards of $20 million or less in a typical case.

By and large, in-house counsel have chalked up much of these differences to the luck of the draw. Such a sentiment is all too common not only among practitioners, but also among policymakers and academics. No less than the eminent IP scholar Mark Lemley has remarked, “Patent damages are unpredictable because the criteria most commonly used are imprecise and difficult to apply.”

Mazzeo, Hillel, and Zyontz make an impressive contribution to the literature by casting substantial doubt on such views. Specifically, in their recent empirical study of district court patent infringement judgments between 1995 and 2008, they show that patent damages can be explained in large part by a fairly small number of patent-, litigant-, and court-related factors.

The authors assembled a set of over 1300 case outcomes from the PricewaterhouseCoopers database, which they boiled down to 340 judgments in favor of the patentholder in which award details were available. Although this number of judgments may seem low, based on independent work of my own for a study on the duration of patent infringement actions, these counts represent a high percentage of the total number of actions and judgments. Thus, it is unlikely that including the unavailable judgments and awards in the dataset would substantially change their results.

Mazzeo, Hillel, and Zyontz begin their exposition by noting, contrary to the widespread view that patent damages awards are shockingly high, that the median damage award remained fairly constant from 1995 through 2008, at a comparatively low figure of roughly $5 million. The billion-dollar damage awards in Apple v. Samsung and other cases are thus extreme outliers. Indeed, during the time period at issue, only eight awards came in over $200 million, yet those awards accounted for 47.6% of collective damages of all cases (other than generic-branded pharmaceutical disputes under the Hatch-Waxman Act). So, outside of a small number of highly publicized, blockbuster cases, damages awards are (perhaps shockingly) low, a fact that flies in the face of current rhetoric about outsized awards in patent cases.

The most impressive aspect of the article is the authors’ empirical models explaining roughly 75% of the variation among damages awards. In particular, they assemble various factors—including the number of patents asserted, the age of the patents, the number of citations to the patents, whether the defendant is publicly traded, and whether a jury or judge assessed damages—and construct a regression model that shows statistically significant relationships between these factors and the amount of damages awarded.

For example, in one model, if the defendant was publicly traded, damages were roughly 1.5 times higher than when the defendant was privately held, controlling for other factors. What is particularly striking is that the outlier awards—namely, those above $200 million—fall squarely within the model’s explanatory power. Thus, rather than being the random results of rogue juries, these large damage awards likely reflect a variety of measurable factors that point in favor of larger awards across the large number of cases confronted by the courts.
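To make the "1.5 times" figure concrete, here is a minimal sketch of how such a multiplier is usually read off a regression. It assumes, hypothetically, that the dependent variable is the natural log of the damages award and that "1.5 times higher" means a multiplier of 1.5; the review does not spell out the model's functional form, and the function names below are illustrative only.

```python
import math

def multiplier_from_log_coefficient(beta: float) -> float:
    """Convert a coefficient on a 0/1 indicator in a log-outcome model
    into the implied multiplicative effect on the outcome itself."""
    return math.exp(beta)

def log_coefficient_from_multiplier(multiplier: float) -> float:
    """Inverse conversion: the coefficient that implies a given multiplier."""
    if multiplier <= 0:
        raise ValueError("multiplier must be positive")
    return math.log(multiplier)

# Hypothetical reading of the review's example: a publicly traded defendant
# is associated with damages about 1.5 times those of a private defendant.
beta_public = log_coefficient_from_multiplier(1.5)
print(f"Implied coefficient: {beta_public:.3f}")
print(f"Back to multiplier: {multiplier_from_log_coefficient(beta_public):.2f}")
```

Under these assumptions, a coefficient of about 0.405 on the "publicly traded" indicator would correspond to the 1.5x effect, holding the other factors fixed.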

These findings have important public policy implications. As the authors point out, stable, predictable damage awards are essential for a properly functioning patent system. Otherwise, the careful balance of incentives to patentees to innovate and incentives to third parties either to acquire licenses to patented inventions or invent around would be thwarted.

On the other hand, Mazzeo, Hillel, and Zyontz overreach by concluding that their “findings thus bolster the core tenets of the patent system” that exclusive patent rights are an appropriate means for protecting inventions. Specifically, the authors’ argument that “several of the driving factors correspond to accepted indicators of patent quality” is insufficient to support such an assertion, because these factors—such as forward citations, number of claims, and number of patents—are accepted indicators of a patent’s economic “value,” not a patent’s “quality,” which concerns its validity. (Although there is very likely a relationship between the two notions, no study has resoundingly linked patent value to patent quality.) And typically these value indicators have been derived from studies of patent litigation. Thus, to argue that high damages in litigation justify the patent system on the basis of such metrics is essentially circular. Indeed, as I have argued elsewhere, it is very likely that patent damages as they stand should be reengineered to provide more optimal innovation incentives.

Nonetheless, despite this study’s inability to “bolster the core tenets of the patent system,” its result that damages awards are fairly predictable is a very important contribution to the literature. Moreover, this work provides the starting point for more comprehensive investigations of damages in patent cases, such as the follow-on study the authors recently undertook regarding non-practicing entity (NPE) and non-NPE suits. Additionally, their explanatory approach could be extended to the more basic win/loss determinations on infringement and validity. One cannot ask for much more in any empirical study, and Mazzeo, Hillel, and Zyontz deserve kudos for their exacting labors and notable insights.

Cite as: Ted Sichelman, Are Patent Damages Uncertain?, JOTWELL (June 30, 2014) (reviewing Michael Mazzeo, Jonathan Hillel, & Samantha Zyontz, Explaining the “Unpredictable”: An Empirical Analysis of Patent Infringement Awards, 35 Int’l Rev. of L. & Econ. 58 (2013)), https://ip.jotwell.com/are-patent-damages-uncertain/.

May 28, 2014 Stacey L. Dogan

Graeme B. Dinwoodie, Secondary Liability for Online Trademark Infringement: The International Landscape, 36 Colum. J.L. & Arts (forthcoming 2014), available at SSRN.

Although we live in a global, interconnected world, legal scholarship – even scholarship about the Internet – often focuses on domestic law with little more than a nod to developments in other jurisdictions. That’s not necessarily a bad thing; after all, theoretically robust or historically thorough works can rarely achieve their goals while surveying the landscape across multiple countries with disparate traditions and laws. But as a student of U.S. law, I appreciate articles that explain how other legal systems are addressing issues that perplex or divide our scholars and courts. Given the tumult over intermediary liability in recent years, comparative commentary on that topic has special salience.

In this brief (draft) article, Graeme Dinwoodie explores both structural and substantive differences in how the United States and Europe approach intermediary trademark liability in the Internet context. To an outsider, the European web of private agreements, Community Directives, CJEU opinions, and sundry domestic laws can appear daunting and sometimes self-contradictory. Dinwoodie puts them all into context, offering a coherent explanation of the interaction between Community law, member state law, and private ordering, and situating the overall picture within a broad normative framework. And he contrasts that picture with the one emerging through common law in the United States. The result is a readable, informative study of two related but distinct approaches to intermediary trademark law.

Dinwoodie begins by framing the core normative question: how should the law balance trademark holders’ interest in enforcing their marks against society’s interest in “legitimate development of innovative technologies that allow new ways of trading in goods”? This tension is a familiar one: from Sony through Grokster, from Inwood through eBay, courts and lawmakers have struggled with how to allocate responsibility between intellectual property holders, those who infringe their rights, and those whose behavior, product, or technology plays some role in that infringement. Dinwoodie identifies the tension but does not resolve it, purporting to have the more modest goal of exposing the differences between the American and European approaches and discussing their relative virtues. But the article barely conceals Dinwoodie’s preference for rules that give intermediaries at least some of the burden of policing trademark infringement online.

Structurally, there are some significant differences between the European and American approaches. Whereas courts have shaped the U.S. law primarily through common law development, Europe has a set of Directives that offer guidance to member states in developing intermediary trademark liability rules. Europe has also experimented with private ordering as a partial solution, with stakeholders recently entering a Memorandum of Understanding (MOU) that addresses the role of brand owners and intermediaries in combating counterfeiting online. In other words, rather than relying exclusively on judge-made standards of intermediary liability, European policymakers and market actors have crafted rules and norms of intermediary responsibility for trademark enforcement.

Whether as a result of these structural differences or as a byproduct of Europe’s tradition of stronger unfair competition laws, the substantive rules that have emerged in Europe reflect more solicitude for trademark owners than provided by United States law. Doctrinally, intermediaries have a superficial advantage in Europe, because the Court of Justice limits direct infringement to those who have used the mark in connection with their own advertising or sales practices. They also benefit from Europe’s horizontal approach to Internet safe harbors. Unlike the United States, Europe includes trademark infringement, unfair competition, and other torts in the “notice-and-takedown” system, offering service providers the same kind of immunity for these infractions as they receive under copyright law. The safe harbor law explicitly provides that intermediaries need not actively root out infringement.

Other features of European law, however, temper the effects of these protections. Most significantly, Article 11 of the European Enforcement Directive requires member states to ensure that “rights holders are in a position to apply for an injunction against intermediaries whose services are used by third parties to infringe an intellectual property right.” In other words, even if they fall within the Internet safe harbor (and thus are immune from damages), intermediaries may face an injunction requiring affirmative efforts to reduce infringement on their service. In Germany, at least, courts have ordered intermediaries to adopt technical measures such as filtering to minimize future infringement. The threat of such an injunction no doubt played a role in bringing intermediaries to the table in negotiating the MOU, which requires them to take “appropriate, commercially reasonable and technically feasible measures” to reduce counterfeiting online.

This explicit authority to mandate filtering or other proactive enforcement efforts finds no counterpart in U.S. law. On its face, U.S. contributory infringement law requires specific knowledge of particular acts of infringement before an intermediary has an obligation to act. And while scholars (including myself) have argued that intermediaries’ efforts to reduce infringement have played an implicit role in case outcomes, the letter of the law requires nothing but a reactive response to notifications of infringement. Dinwoodie suggests that this “wooden” approach to intermediary liability may miss an opportunity to place enforcement responsibility with the party best suited to enforce.

In the end, while professing neutrality, Dinwoodie clearly sees virtues in the European model. He applauds the horizontal approach to safe harbors, welcomes the combination of legal standards and private ordering, and praises the flexibility and transparency of Europe’s largely least-cost-avoider model. Whether the reader agrees with him or prefers the United States’ more technology-protective standard, she will come away with a better understanding of the structure and content of intermediary trademark law in both the United States and Europe.

May 2, 2014 Christopher J. Buccafusco

One of the central tensions in the institutional design of innovation regimes is the trade-off between incentives and disclosure. Innovation systems, including intellectual property systems, are created to optimize creative output by balancing ex ante incentives for initial creators with ex post disclosure of the innovation to follow-on creators and the public. According to accepted theory, the more rigorous the disclosure—in terms of when and how it occurs—the weaker the incentives. But a fascinating new experiment by Kevin Boudreau and Karim Lakhani suggests that differences in disclosure regimes can affect not just the amount of innovation but also the kind of innovation that takes place.

Boudreau and Lakhani set up a tournament on the TopCoder programming platform that involved solving a complicated algorithmic task over the course of two weeks. All members of the community were invited to participate in the tournament, and contest winners would receive cash prizes (up to $500) and reputational enhancement within the TopCoder community. The coding problem was provided by Harvard Medical School, and solutions were scored according to accuracy and speed. Importantly, the top solutions in the tournament significantly outperformed those produced within the medical school, but that’s a different paper.

Boudreau and Lakhani randomly assigned participants into different conditions based on varying disclosure regimes and tracked their behavior. The three disclosure conditions were:

- Intermediate Disclosure – Subjects could submit solutions to the contest, and, when they did, the solutions and their scores were immediately available for other subjects in the same condition to view and copy.

- No Disclosure – Subjects’ solutions to the contest were not disclosed to other subjects until the end of the two-week contest.

- Mixed – During the first week of the contest, submissions were concealed from other subjects, but, during the last week of the contest, they were open and free to copy.

For the Intermediate and Mixed conditions, subjects were asked to provide attribution to other subjects whose code they copied.

Cash prizes were given out at the end of the first and second weeks based on the top-scoring solutions. For the Intermediate condition, the prizes were split evenly between the subject who had the highest scoring solution and the subject who received the highest degree of attribution.

The subjects were about equally split between professional and student programmers, and they represented a broad range of skill levels. 733 subjects began the task. Of them, 124 submitted a total of 654 intermediate and final solutions. The solutions were determined to represent 56 unique combinations of programming techniques.
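The participation figures above can be turned into two rough rates. The sketch below uses only the counts reported in this paragraph; the variable names are illustrative, and the derived rates are simple arithmetic, not statistics reported by the authors.

```python
# Counts as reported in the review of the TopCoder experiment.
started = 733            # subjects who began the task
submitters = 124         # subjects who submitted at least one solution
solutions = 654          # intermediate and final solutions submitted
unique_approaches = 56   # distinct combinations of programming techniques

# Share of starters who submitted anything at all.
submission_rate = submitters / started

# Average number of solutions per submitting subject.
solutions_per_submitter = solutions / submitters

print(f"Submission rate: {submission_rate:.1%}")
print(f"Solutions per submitter: {solutions_per_submitter:.2f}")
```

On these numbers, roughly one in six subjects who started the task submitted a solution, and each submitter averaged a little over five submissions.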

The authors predicted that mandatory disclosure in the Intermediate condition would reduce incentives to participate because other subjects could free-ride on the solutions of initial inventors. The data are consistent with this hypothesis: Fewer people submitted answers in the Intermediate condition than in the No Disclosure condition, and the average number of submissions and the number of self-reported hours worked were also lower by significant margins. The Mixed condition generally produced data that were between the other two conditions. Ultimately, scores in the Intermediate condition were better than those in the other conditions because subjects could borrow from high-performing solutions.

More importantly, the data also disclosed differences in how subjects solved the problem. Consistent with the authors’ hypotheses, subjects in the Intermediate condition tried fewer technical approaches and seemed to experiment less than did those in the No Disclosure condition. Once significant improvements were disclosed, other subjects in the Intermediate condition tended to borrow the successful code leading to a relatively smooth improvement curve. In the No Disclosure condition, by contrast, although new submissions were generally better than those the subjects had submitted before, they were more variable and less consistent in their improvement.

In summary, when subjects can view each other’s code, innovation tends to be more path-dependent and to happen more rapidly and successfully than when there is no disclosure. But when innovation systems are closed, people tend to participate more, and they tend to try a wider variety of solution strategies.

In previous research, these authors have explained how open-access innovation systems succeed in the face of diminished extrinsic incentives. This experiment provides valuable insight into the relative merits of open- and closed-access systems. Open-access systems will, all else equal, have advantages when creators have significant intrinsic incentives and when the innovation problem has one or few optimal solutions.

Closed-access systems, by contrast, will prove comparatively beneficial when the system must provide independent innovation incentives and when the problem involves a wide variety of successful solutions. The experiment’s contribution, then, is not to resolve the debate about open versus closed innovation but rather to help policymakers and organizations predict which kind of system will tend to be most beneficial.

The experiment also suggests helpful ways of thinking about the scope of intellectual property rights in terms of follow-on innovation. For example, strong derivative-works rights in copyright law create a relatively closed innovation system compared to patent law’s regime of blocking patents. If we think of the areas of copyright creativity as exhibiting a large variety of optimal solutions, then the closed-innovation system may help prevent path-dependence and encourage innovation (evidence from the movie industry notwithstanding). Future research could test this hypothesis.

As with any experiment, many questions remain. Boudreau and Lakhani’s incentives manipulation is not as clean as could be hoped, both because payouts in the Intermediate condition are lower and because attribution in the No Disclosure condition is effectively unavailable. Accordingly, it is difficult to make causal arguments about the relationship between the disclosure regime and incentives. In addition, although the Intermediate condition produces lower participation incentives for subjects who expect to be high performing, it creates higher participation incentives for subjects who expect to be low performing because they can simply borrow from high-scoring submissions at the end of the game.

Interestingly, there seems to be surprisingly little borrowing, which could suggest a number of curious features about the experiment: Perhaps only high-skill subjects are capable of borrowing and/or there may be social norms against certain kinds of borrowing even though it is technically allowed. And, as always, there are questions about the representativeness of the sample. Subjects were likely disproportionately men, and they were also likely ones with significant open-source experience where they may have internalized the norms of that community. On the other hand, TopCoder bills itself as “A Place to Compete,” which may have primed competitive behaviors rather than sharing behaviors.

Ultimately, Boudreau and Lakhani have produced an exciting new contribution to intellectual property and innovation research.

Mar 31, 2014 Christopher J. Sprigman

Paul Heald, The Demand for Out-of-Print Works and Their (Un)Availability in Alternative Markets (2014), available at SSRN.

Back in mid-2013, Paul Heald posted to SSRN a short paper that already has had far more impact than academic papers usually have on the public debate over copyright policy. That paper, How Copyright Makes Books and Music Disappear (and How Secondary Liability Rules Help Resurrect Old Songs), employed a clever methodology to see whether copyright facilitates the continued availability and distribution of books and music. Encouraging the production of new works is, of course, copyright’s principal justification. But some have contended that copyright is also necessary to encourage continued exploitation and maintenance of older works. We find an example in the late Jack Valenti, who, as head of the Motion Picture Association of America, in 1995 made the argument before the Senate Judiciary Committee that it was necessary to extend the copyright term in part to provide continued incentives for the exploitation of older works. “A public domain work is an orphan,” Valenti testified. “No one is responsible for its life.” And of course if no one is responsible for keeping a creative work alive, it will, Valenti suggests, die.

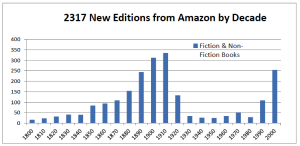

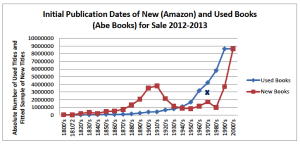

Is that argument right? Enter Paul Heald. Heald’s 2013 article employs a set of clever methodologies to test whether copyright did, indeed, facilitate the continued availability of creative works—in Heald’s article, books and music. With respect to books, Heald constructed a random sample of 2300 books on Amazon, arranged them in groups according to the decade in which they were published, and counted them. Here are his findings:

© 2013 by Paul Heald. All rights reserved. Reprinted with permission of Paul Heald.

If you hadn’t already seen Heald’s article, the shape of this graph should surprise you. You would probably expect that the number of books from Amazon would be highest in the most recent decade, 2000–2010, and would decline continuously as one moves to the left in the graph (i.e., further into the past). On average, books are, all else equal, less valuable as they age, so we should expect to see fewer older books on Amazon relative to newer ones.

But that’s not what we see. Instead, we see a period from roughly 1930 to 1990, where books just seem to disappear. And we see a large number of quite old books on Amazon. There are many from the late-19th century and the first two decades of the 20th century. Indeed, there are far more new editions from the 1880s on Amazon than from the 1980s.

What on earth is causing this odd pattern? In a word: copyright. All books published before 1923 are out of copyright and in the public domain. And a variety of publishers are engaging in a thriving business of publishing these out-of-copyright works—and so they’re available on Amazon. In contrast, a large fraction of the more recent works—the ones under copyright—simply disappear. Maybe they’ll spring back to life when (or if?) their copyright expires. But for now, copyright doesn’t seem to be doing anything to facilitate the continued availability of these books. In fact, copyright seems to be causing some books to disappear.

Heald does a similar analysis for music, and this analysis too shows that copyright causes music to disappear, relative to music in the public domain. The effect is less pronounced than in the case of books, but it is still there.

In short, Heald’s paper placed a big question mark after the “continued availability” justification for copyright. If we care about works remaining available, then copyright, in fact, seems to be hurting and not helping.

Now Heald is back with a follow-up paper, The Demand for Out-of-Print Works and Their (Un)Availability in Alternative Markets, that takes on the most important question raised by his first: Should we be concerned that copyright appears to make works disappear? If there is no consumer demand for these disappeared works, then possibly not. But if there is consumer demand for the works that copyright kills, then we should care because that demand is not being met.

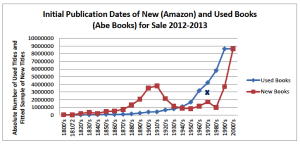

Heald employs a number of tests to determine whether there is consumer demand for the books that copyright makes disappear. Read the article if you want a full account, but it is worthwhile to give a couple of highlights. In a particularly nifty part of the paper, Heald compares books available on Amazon with those available on the biggest used books website. The graph is instructive:

© 2014 by Paul Heald. All rights reserved. Reprinted with permission of Paul Heald.

The gap between the red (Amazon) and blue (used book) curves suggests that used book sellers are serving a market for many books that copyright has made disappear from new book shelves, which in turn indicates that there is consumer demand for these books.

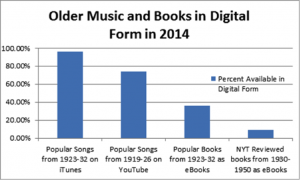

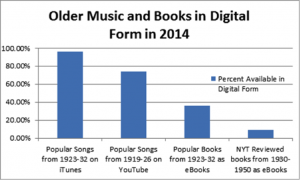

Heald then examines other possible ways that the market may provide access to works that copyright has made disappear. For music, Heald looks to see whether copyright owners are digitizing out-of-print records and either selling them on iTunes or posting them on YouTube. The answer, hearteningly, appears to be yes. Unfortunately, the picture for books is much less reassuring. As usual, Heald’s chart speaks more clearly than words:

© 2014 by Paul Heald. All rights reserved. Reprinted with permission of Paul Heald.

Look at the number of popular songs from 1923–32 that are on iTunes: almost all of them. But then look at the number of popular books from the same period that are offered as eBooks: fewer than 40%. Many of these books are not available on Amazon in paper form. Nor are they distributed digitally.

So why the difference between the music and book publishing industries when it comes to the availability of older titles still under copyright? I’ll leave that as a mystery—and I hope your unslaked curiosity will lead you to read Heald’s article. It is well worth your time.

Feb 28, 2014 Pamela Samuelson

Sony’s Betamax was the first reprography technology to attract a copyright infringement lawsuit. Little did copyright experts back then realize how much of a harbinger of the future the Betamax would turn out to be. Countless technologies since then designed, like the Betamax, to enable personal use copying of in-copyright works have come to market. Had the Supreme Court outlawed the Betamax, few of these technologies would have seen the light of day.

The most significant pro-innovation decision was the Supreme Court’s Sony Betamax decision. It created a safe harbor for technologies with substantial non-infringing uses. Entrepreneurs and venture capitalists have heavily relied on this safe harbor as a shield against copyright owner lawsuits. Yet, notwithstanding this safe harbor, copyright owners have had some successes in shutting down some systems, most notably, the peer-to-peer file-sharing platform Napster.

It stands to reason that decisions such as Napster would have some chilling effect on the development of copy-facilitating technologies. But how much of a chilling effect has there been? Some would point to products and services such as SlingBox and Cablevision’s remote DVR feature and say “not much.”

Antitrust and innovation scholar Michael Carrier decided to do some empirical research to investigate whether technological innovation has, in fact, been chilled by decisions such as Napster. He conducted qualitative interviews with 31 CEOs, co-founders and vice presidents of technology firms, venture capitalists (VCs), and recording industry executives. The results of his research are reported in this Wisconsin article, which I like a lot.

One reason I liked the article is that it confirmed my longstanding suspicion that the prospect of extremely large awards of statutory damages does have a chilling effect on the development of some edgy technologies. Because statutory damages can be awarded in any amount between $750 and $150,000 per infringed work and because copy-facilitating technologies can generally be used to interact with millions of works, copyright lawsuits put technology firms at risk for billions and sometimes trillions of dollars in statutory damages. For instance, when Viacom charged YouTube with infringing 160,000 works, it exposed YouTube and its corporate parent Google to up to $24 billion in damages. While a company such as Google has the financial resources to fight this kind of claim, small startups are more likely to fold than to let themselves become distracted by litigation and spend precious VC resources on lawyers.
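The exposure arithmetic in the preceding paragraph can be checked with a short sketch. It takes the per-work range exactly as stated in the text ($750 to $150,000) and simply multiplies by the number of works; the function name is illustrative, and this is not a model of how a court would actually set an award.

```python
from typing import Tuple

# Statutory damages range per infringed work, as stated in the text (USD).
MIN_PER_WORK = 750
MAX_PER_WORK = 150_000

def statutory_exposure(works_infringed: int) -> Tuple[int, int]:
    """Return the (minimum, maximum) statutory damages exposure in dollars
    for a given number of allegedly infringed works."""
    if works_infringed < 0:
        raise ValueError("works_infringed must be non-negative")
    return works_infringed * MIN_PER_WORK, works_infringed * MAX_PER_WORK

# The Viacom v. YouTube example from the text: 160,000 works.
low, high = statutory_exposure(160_000)
print(f"Minimum exposure: ${low:,}")
print(f"Maximum exposure: ${high:,}")
```

For 160,000 works, the ceiling works out to $24 billion, matching the figure in the text, while the floor is $120 million. On the same assumptions, a service touching ten million works would face a ceiling of $1.5 trillion, which is where the "trillions" come from.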

But a better reason to like the article is the fascinating story Carrier and his interviewees tell about the mindset of the record labels about Napster and the technology “wasteland” caused by the Napster decision.

The lesson that record labels should have learned from Napster’s phenomenal (if short-lived) success was that consumers wanted choice—to be able to buy a single song instead of a whole album—and if it was easy and convenient to get what they wanted, they would become customers for a whole new way of doing business. Had the record labels settled with Napster, they would have benefited from the new digital market and earned billions from the centralized peer-to-peer service that Napster wanted to offer.

The labels were used to treating record stores as their customers, not the people who actually buy and play music. Radio play, record clubs, and retail were the focus of the labels’ attention. They thought that the Internet was a fad, or a problem to be eradicated. They were unwilling to allow anyone to create a business on the back of their content. They believed that if they didn’t like a distribution technology, it would go away because they wouldn’t license it. They coveted control above all. When the labels began to venture into the digital music space themselves, they wanted to charge $3.25 a track, which was completely unrealistic.

Some of Carrier’s interviewees thought that the courts had reached the right decision in the Napster case, but questioned the breadth of the injunction, which required 100% effectiveness in filtering out infringing content and not just the use of best efforts, thereby making it impossible to do anything in the digital music space. One interviewee asserted that in the ten years after the Napster decision, iTunes was the only innovation in the digital music marketplace. Many more innovations would have occurred but for the rigidity of the Napster ruling and the risk of personal liability for infringement by tech company executives and VCs.

The role of copyright in promoting innovation was recently highlighted in the Department of Commerce’s Green Paper on “Copyright Policy, Creativity and Innovation in the Digital Economy” (July 2013). It aspires to present a balanced agenda of copyright reform ideas that will promote innovation. It is an encouraging sign that the Green Paper identifies statutory damage risks in secondary liability cases as a policy issue that should be addressed. Reforming statutory damages would not entirely eliminate the risks that copyright would chill innovation, but it would go a long way toward that goal.